This post is part of The Containerization Chronicles, a series of posts about Containerization. In them, I write about my experiments and what I’ve learned on Containerization of applications. The contents of this post might make more sense if you read the previous posts in this series.

EDIT:

I changed the title of the post from “Containerize dev, tst and prd” to “Containerize development” because that is my actual goal here. Although I do create the production image in this post, some people were getting confused, thinking it was about the CI test environment and the production environment we will deploy to.

After cleaning up the Symfony demo project a bit, we are ready to do some containerization…

I will talk about:

- Logging

- Containerizing development

- Remove the database from VCS

- Run and stop the containers

- Integrate with PHPStorm

If you want to jump right into the code, this is the tag on GitHub.

1. Logging

The containers, while running, will output anything that is written to stdout.

In order to get the logs from the container, we will simply make sure the application writes its logs to stdout. This will make it super easy for us to see the logs while developing but, for production, it will also make it super easy to aggregate the logs of all containers and direct them into a centralized location. As I understand at this moment, Kubernetes has some functionality to do just this! I will get to that sometime in a later post.

For now, in our case we just need to add a bit of configuration to our application logger, Monolog:

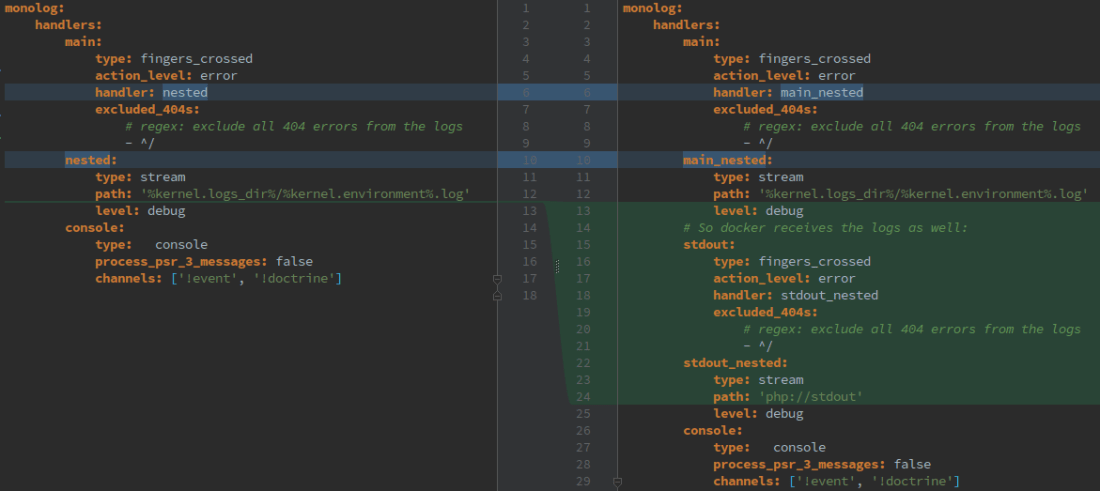

In the Monolog configuration for the development environment:

In the Monolog configuration for the production environment:

2. Containerizing development

The demo application is fairly simple: it only needs PHP, running on the PHP embedded web server, with an SQLite file as the database. For this reason, we could containerize the application using only Docker. However, since we want to extend the application to use an HTTP server and a database server in the future, we will use Docker Compose from the start.

EDIT:

We are going to create 3 docker files, one for the base image, another for the production image which also contains the application, and another for the image that will be used during development and for running the tests while developing.

This could also be done with a multi-stage build, which uses only one docker file, but I will leave that for another post.

2.1 The base container image

We will be building a new production container image every time we release a new version of our application. However, the OS dependencies of our production container are much more stable: they change at a much slower pace than our application.

So, we will create a base container image with all those stable OS dependencies, so that we don’t need to install them every time we create a new production image.

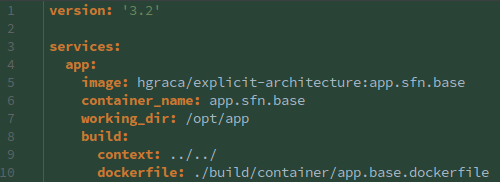

This docker compose file is very simple: it only defines the name of the image to be built and the docker file to be used to build it.

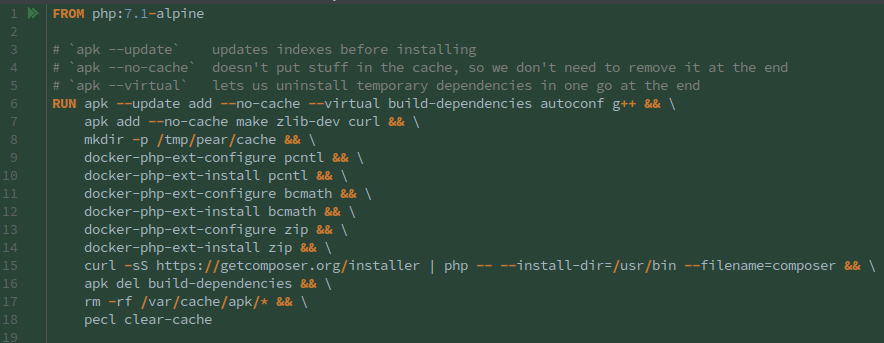

The dockerfile is more interesting. We base our image on the default PHP 7.1 Alpine image, and we install all the OS related dependencies.

We install all the dependencies in one RUN command, so that it only creates one layer in the image, making it smaller. We start by updating the apk sources and then ask apk to install the dependencies.

We use apk twice: the first time to install the dependencies we can remove at the end of the process, and the second time to install the dependencies we need to keep in the image.

The --no-cache flag tells apk not to keep a local cache of the package index, and the --virtual flag tells apk to group the packages that follow under the name build-dependencies, which is very convenient at the end of the process because we can remove them all in one go.

After installing all dependencies, we make sure we remove all cache and unneeded dependencies.
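To make the pattern concrete, here is a minimal sketch of the command sequence that such a RUN instruction could chain together. The package names (autoconf, g++, make, icu-dev, icu-libs) and the intl extension are assumptions for illustration, not necessarily the ones used in this project. The run helper and the DRY_RUN variable are also hypothetical: they only exist so that the sequence can be printed outside of an Alpine container.

```shell
#!/bin/sh
# Sketch of the commands a single RUN instruction could chain with "&&".
# Package names are illustrative assumptions, not the project's real list.

# Hypothetical helper: print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

install_os_dependencies() {
    # Build-time packages, grouped under one virtual name for easy removal.
    run apk add --no-cache --virtual build-dependencies autoconf g++ make icu-dev &&
    # Runtime packages that must stay in the image.
    run apk add --no-cache icu-libs &&
    # Compile the PHP extension (helper script shipped with the official PHP images).
    run docker-php-ext-install intl &&
    # Remove everything grouped under build-dependencies in one go.
    run apk del build-dependencies
}
```

Calling `DRY_RUN=1 install_os_dependencies` prints the four commands instead of executing them. In a dockerfile, the same commands would be joined with `&&` inside one RUN instruction, so that they produce a single layer.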

2.2 The production container image

The production image will be a self-contained image with all that is needed to run the application in production. This means it will contain all the application code and all its dependencies. Furthermore, the container entry point will be the command that brings the application up and makes it ready to receive requests.

As we are using SQLite as the database engine, the SQLite file will be a new one in every deployment. This is fine for now; we will add a MySQL container at some point in the future.

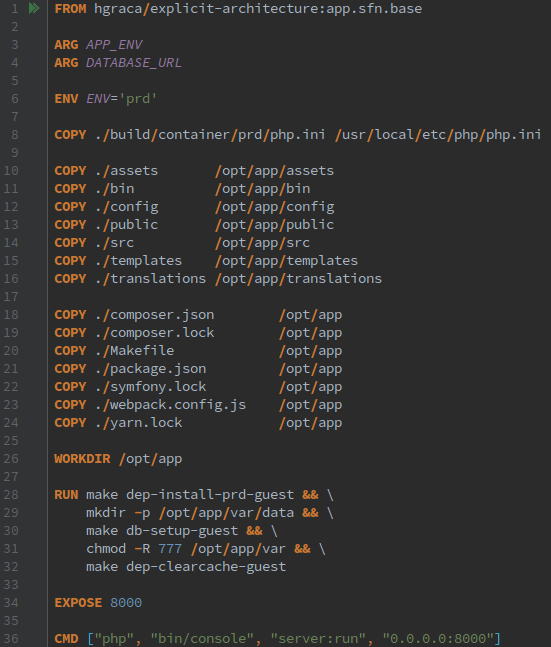

As we can see on the top, the docker image is based on the base image explained above.

The ARG lines declare APP_ENV and DATABASE_URL as build-time variables, whose values we pass when building the image. They only exist during the build, so they are not a requirement for running the container.

As, by default, this image is supposed to run in production we set the environment variable ENV to prd.

We copy into the image only what is needed to run the application in production. We take special care to exclude the tests and build folders, as in time they will add a considerable amount of space, and we set the workdir to the root of the application inside the image. Although the dockerfile is in a nested folder, we only need to specify the . as the source because this will be run by docker compose, which will set the context to the root of the project.

So far we just copied the application into the image, but now we also need to prepare it to run. This means installing all its dependencies, for which we use a make command that we added to our makefile, and creating the database that will be used. We also set the var folder permissions to 777 so that, if we run the container with a non-root user, we can still use that folder for caching, etc. In the end, we clean up the composer cache because we don’t need it inside the image, which makes the image smaller.

We also copy our custom php config into the image. We shouldn’t change it in the images for other environments, so that we develop and test with the same config.

Finally, we set up the command to run as the default entry point of the container, which in this case starts the PHP embedded HTTP server, listening on port 8000.

EDIT: I was wrong about the EXPOSE directive, and updated the post accordingly. My thanks to kkapelon for pointing it out to me.

The EXPOSE instruction marks a port as being used by the service running in the container. It works as documentation and does not, by itself, make the port accessible from the host. To reach the port from the host, or from any client that can reach the host, we publish it when starting the container: the -P flag publishes all exposed ports to random host ports, and the -p flag publishes a specific port, whether or not it was declared with EXPOSE. You can read more about it here and here.
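As a rough illustration of the publishing flags, the sketch below builds the docker run command for three cases. The image name symfony-demo:prd and the publish_command function are hypothetical; the only thing taken from this post is that the application listens on port 8000 inside the container.

```shell
#!/bin/sh
# Hypothetical image name, used only for illustration.
IMAGE="${IMAGE:-symfony-demo:prd}"

# Print the "docker run" command for one of three publishing modes.
publish_command() {
    case "$1" in
        # No publishing flag: port 8000 is not published on the host.
        internal) echo "docker run --rm -d $IMAGE" ;;
        # -p HOST:CONTAINER maps a chosen host port to container port 8000.
        explicit) echo "docker run --rm -d -p ${2:-8080}:8000 $IMAGE" ;;
        # -P maps every exposed port to a random free host port.
        all)      echo "docker run --rm -d -P $IMAGE" ;;
        *)        echo "usage: publish_command internal|explicit [host_port]|all" >&2
                  return 1 ;;
    esac
}
```

For example, `publish_command explicit 8080` prints a command that would make the application reachable at http://localhost:8080. After starting a container, `docker port <container>` lists the host ports that were actually assigned.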

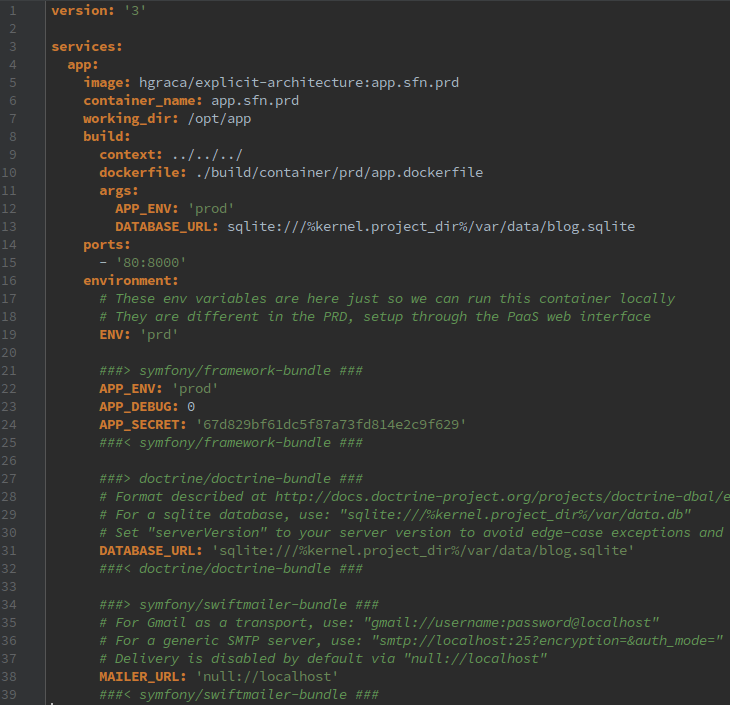

In the docker-compose file we set the version to 3 because we don’t need any higher and also, at this moment, PHPStorm is not compatible with higher versions.

We set the name of the image that we will create from this docker compose file, as well as the container name, and the working dir when the container is up. In the build section, we need to set the context to 3 folders above the folder of this file because that’s the project root, and then we need to reference the dockerfile from the project root. We also need to specify the ARG variables required by the dockerfile to build the image.

The remaining relevant configuration is the ports. We map the host port 80 to the container port 8000 because port 8000 is where the application is listening inside the container and port 80 is the default port used by HTTP. This way, on the host we can access the application using http://localhost without specifying any port. If we were already using the host port 80 for some other application, we would need to use another port instead, for example port 8080, and then we would reach the application at http://localhost:8080.

2.3 The development container image

The development image will be based on the production image. It will be used during development, as an always-up server that we can use to test the application in a browser. For this, unlike the production image, it will have a volume mounted where the application code resides so that the container can access the code under development.

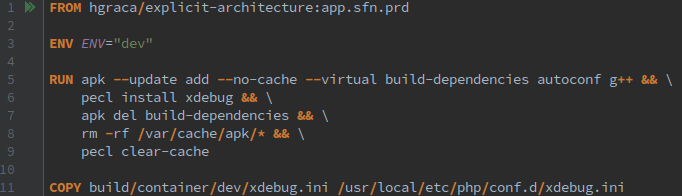

Part of what we can see in the dockerfile was already explained before so I won’t repeat myself. What is relevant at this point is that we want to have xdebug running while developing (we won’t have it turned on during testing, we will see that later) so we install xdebug using pecl. As it happens, to install xdebug we need g++, so we need to install it and then remove it at the end of building the image.

We also copy our xdebug config into the image, so that no matter how we bring the container up, we always have xdebug configured there.

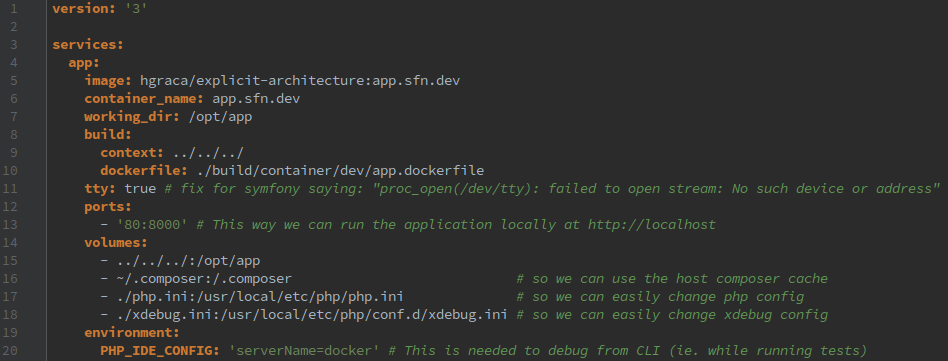

Most of what is in the dev docker-compose file is the same as before.

The relevant changes are that we need to have a tty available in the container, so that we can run and test CLI commands and also because some of the Symfony code used in dev relies on it. We also create a few volumes so that we can share files with the processes that run inside the container:

- the whole codebase;

- the composer cache, so we can update dependencies from within the container;

- the php configuration;

- the xdebug configuration.

2.4 The testing container image

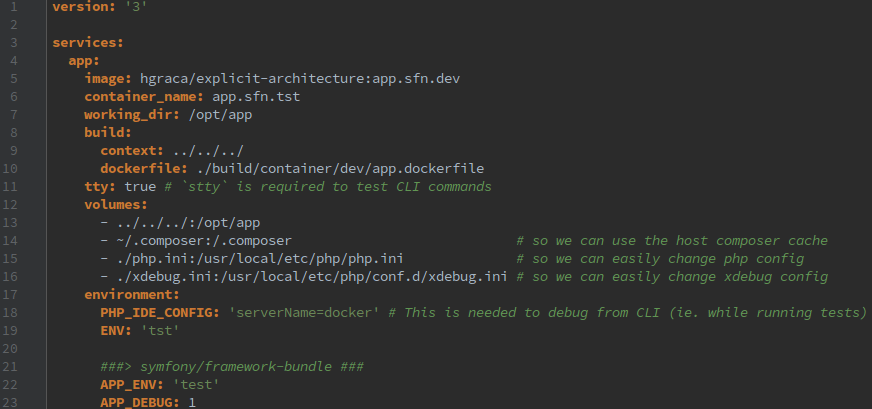

The testing image will be used during development, in this case, to run the test suite against the code under development. Just like the development image, it will have a volume where the code under development will be accessible to the container.

This container is needed so that running the test suite doesn’t inadvertently conflict with the running application, which could occur if we were only using the development image.

For the testing environment, we don’t even have a dockerfile: the image is exactly the same as the dev image, with some subtle differences in the docker compose file:

- The container name is different so that it will not clash with the dev container name, as we want to have the dev container up and run the tests in the test container simultaneously;

- The php.ini file is in the same folder as the testing docker compose file so we can easily tweak the configurations, for example, the available memory (keep in mind, though, that the config should be the same as in production so this should be used only for experimentation);

- The xdebug config file is also in the same directory as the testing docker compose file so that we can enable or disable it at will before running the tests, which is useful if we want to debug the tests.

3. Remove the database from VCS

This demo app is currently keeping the database in the VCS, as an SQLite file. This is not a good practice because everyone is constantly forced to download the DB from VCS, and to roll back the changes every time after running the tests.

However, since from now on we use the makefile test, test_cov and up commands to test and start the application, we don’t need to keep the database in the VCS anymore because those commands will generate the DB if it is not present, so we remove those files from VCS.
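A minimal sketch of how the database file could be removed from version control, assuming git as the VCS. The path var/data/blog.sqlite and the function name untrack_database are hypothetical, so adjust them to wherever the SQLite file lives in the project.

```shell
#!/bin/sh
# Hypothetical location of the SQLite file; adjust to the real project path.
DB_FILE="${DB_FILE:-var/data/blog.sqlite}"

untrack_database() {
    # Stop tracking the file, but keep the local copy on disk.
    git rm --cached --quiet --ignore-unmatch "$DB_FILE" &&
    # Ignore the file from now on, without adding a duplicate entry.
    { grep -qxF "$DB_FILE" .gitignore 2>/dev/null || echo "$DB_FILE" >> .gitignore; }
}
```

Running `untrack_database` from the project root and committing the result leaves the local database untouched, while new clones will rely on the makefile commands to generate it.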

4. Run and stop the containers

While we are developing our application we want an easy way of running and testing it. We don’t want to lose our focus trying to remember docker commands, or having to remove several stopped containers every once in a while.

Furthermore, the containers should be ephemeral, so we can safely remove them once we have used them.

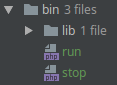

In order to improve our DevEx, we create a run and a stop command:

- The run command allows us to start the container and related containers, and then run other commands against it:

  - With no arguments, the container will be started and kept alive for 1h;

  - With arguments, if the container is running it will send the commands to it and leave it running;

  - With arguments, if the container is NOT running it will start it, send the commands to it and terminate it.

- The stop command will simply stop all containers related to the docker compose file.
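The behaviour described above can be sketched roughly as follows. This is not the actual content of bin/run and bin/stop: the compose file path, the service name app and all function names are assumptions. The sketch also leaves out the one hour limit, which is assumed to come from the command configured for the service (for example a sleep).

```shell
#!/bin/sh
# Sketch of a bin/run + bin/stop pair. All names below are assumptions.
ENV="${ENV:-dev}"
COMPOSE="${COMPOSE:-docker-compose}"   # compose binary to call
COMPOSE_FILE_PATH="${COMPOSE_FILE_PATH:-build/container/$ENV/docker-compose.yml}"  # hypothetical path
SERVICE="${SERVICE:-app}"              # hypothetical service name

compose() {
    $COMPOSE -f "$COMPOSE_FILE_PATH" "$@"
}

is_running() {
    # List the services that currently have a running container.
    compose ps --services --filter "status=running" 2>/dev/null | grep -qx "$SERVICE"
}

run_in_container() {
    if [ "$#" -eq 0 ]; then
        # No arguments: start the service and its related containers in the background.
        compose up -d
    elif is_running; then
        # Already up: send the command to the running container and leave it up.
        compose exec -T "$SERVICE" "$@"
    else
        # Not running: start a one-off container, run the command, then remove it.
        compose run --rm "$SERVICE" "$@"
    fi
}

stop_containers() {
    # Stop and remove the containers defined in the compose file.
    compose down
}
```

With these functions, bin/run would end with `run_in_container "$@"` and bin/stop with `stop_containers`, so that `ENV='tst' ./bin/run php vendor/bin/phpunit` works whether or not the tst container is already up.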

We then further abstract them behind our makefile with shorthand commands, for example:

    cs-fix:
    	ENV='tst' ./bin/run php vendor/bin/php-cs-fixer fix --verbose

    db-setup:
    	ENV='dev' ./bin/run make db-setup-guest

    dep-update:
    	ENV='dev' ./bin/run composer update

    test:
    	- ENV='tst' ./bin/stop # Just in case some container is left over
    	ENV='tst' ./bin/run
    	ENV='tst' ./bin/run make db-setup-guest
    	ENV='tst' ./bin/run php vendor/bin/phpunit
    	$(MAKE) cs-fix
    	ENV='tst' ./bin/stop

    test_cov:
    	ENV='tst' ./bin/run phpdbg -qrr vendor/bin/phpunit --coverage-text --coverage-clover=${COVERAGE_REPORT_PATH}

5. Integrate with PHPStorm

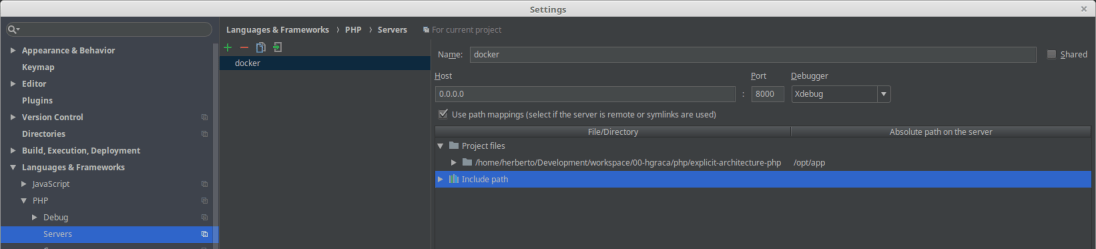

Integrating with PHPStorm becomes straightforward.

We configure the server so that we can debug from the browser:

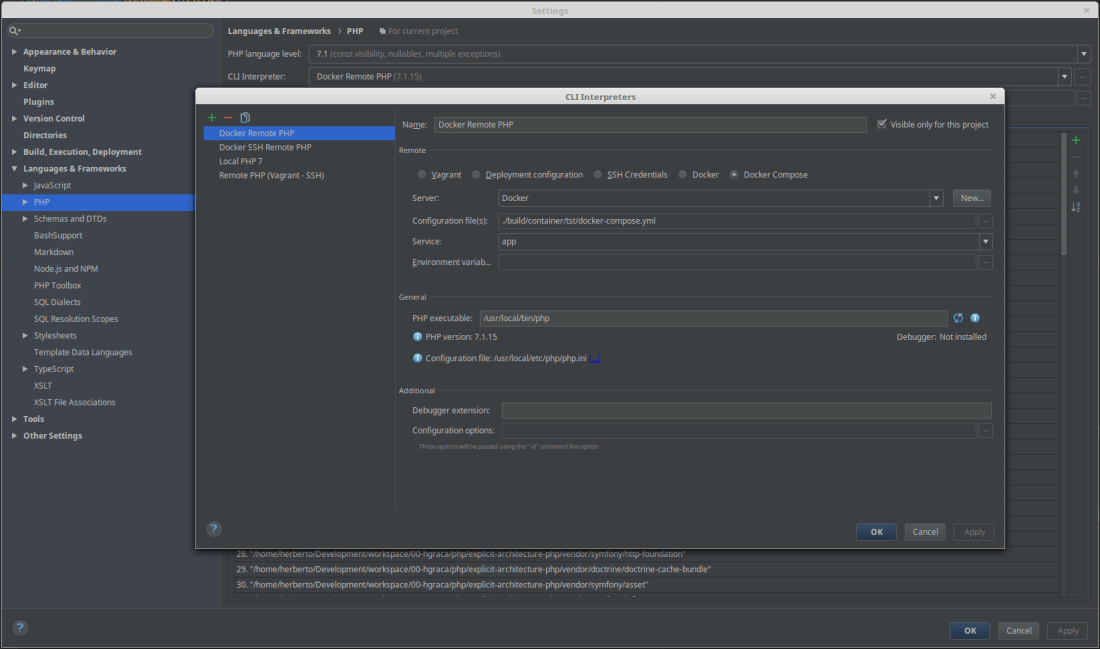

We configure the CLI runner so that we can run the test suites:

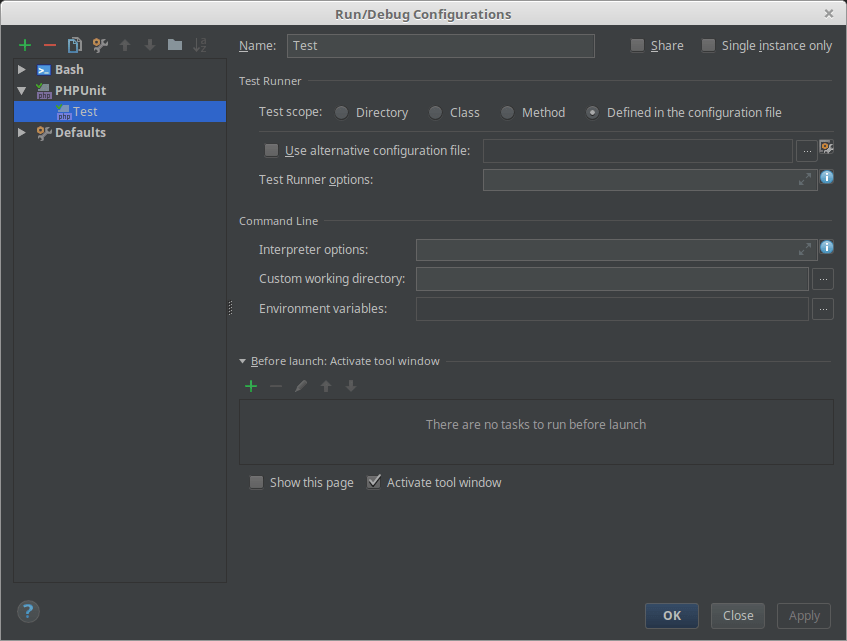

And finally we configure a test run:

I trust you are able to figure out how to run the tests and the debugger.

Well, if you reached this point in the post, congratzz!! 🙂

Please, feel free to share your thoughts and/or ways to improve this.
